Yes. You can get banned from Character AI. You can also get warned, filtered, restricted, or suspended in ways that feel like a ban even when your account still exists.

That difference matters. A blocked message is not the same as a banned account. A refusal loop is not always proof that your profile is gone. And if you use AI chat apps for roleplay, comfort, creator testing, persona work, or long story threads, moderation confusion stops being a minor annoyance fast. It becomes a control problem.

One refused message can do it. One weird warning. One bot that suddenly feels off, and now you are wondering whether months of chats, character tuning, or creative notes can vanish because a platform moved the line. That is the real issue.

This guide explains what banned can mean on Character AI, what usually triggers moderation, why private does not mean exempt, and how to think about platform risk before you put more time, emotion, or work into a system you do not control.

Quick answer: yes, Character AI can ban accounts. But not every block means a ban

If you are asking, “Can you get banned from Character AI?”, the short answer is yes. Character AI can act against accounts and content that break its rules. That can include blocked messages, warnings, feature limits, suspensions, and, in more serious cases, account termination.

However, many users call every bad moderation experience a ban. That is where the panic starts. If a message gets blocked, a bot stops responding the way it did yesterday, or one chat keeps hitting filters, you may be dealing with content enforcement rather than account-level punishment.

Still, do not shrug it off. Repeated boundary-pushing can add up. Public bots usually create more exposure than private chats. And if you treat any third-party AI app like guaranteed permanent access, you are building on rented ground.

Anything else will not hold.

What banned can actually mean on Character AI

Most readers land here in one of two states: either they saw a warning and want to know how bad it is, or they got no clear warning at all and are trying to decode a vague failure. Those can look similar from the outside. They are not the same.

| What it looks like | What it usually means | Do you still have access? | What to do next |
|---|---|---|---|
| One message blocked or refused | A content filter stopped that prompt or response | Usually yes | Do not keep hammering the same prompt; step back and change direction |
| Repeated filtered replies in one chat | That conversation keeps hitting moderation boundaries | Usually yes | Assume the topic is risky; repeated retries can raise the stakes |
| Warning notice or policy message | The platform detected behavior it sees as a violation or near-violation | Usually yes, at least for now | Review recent behavior, save evidence, and stop testing limits |
| Temporary restriction or suspension | Account-level action, often time-limited but not always clearly explained | Partial or no access | Check official notices and use support channels |
| Account termination or login loss tied to enforcement | The highest-level action; access may be removed | No, or effectively no | Appeal through official support and document everything |

The lower steps still matter. A blocked message is smaller than a full Character AI account ban, yet it still shows where the platform’s comfort line sits. If you keep pushing after that signal, the story can change.

This is where almost everyone loses: they treat moderation like a puzzle to beat instead of a system to read.

What usually triggers Character AI moderation

No honest article can give you a magic threshold like “three prompts equals suspension.” Platforms rarely explain enforcement that cleanly. Moderation can be automated, vague, and context-sensitive. Even so, you can map the common risk areas and see what tends to escalate.

At a high level, platforms usually reserve the right to remove content, restrict accounts, or terminate access under their terms of service. If you want the legal baseline for how online services frame those powers, see the Terms of service overview. For broader safety and abuse concepts around online moderation, the Content moderation overview is also useful background.

| Behavior | Typical platform response | Risk level | Why it escalates |
|---|---|---|---|
| Explicit sexual prompting | Blocked replies, refusals, possible warning | Medium to high | A common trigger area that users often keep testing |
| Repeated filter bypass attempts | Stronger blocking, possible account attention | High | Looks deliberate rather than accidental |
| Harassment, hate speech, abusive language | Warnings, restrictions, possible suspension | High | A direct safety issue |
| Violent, self-harm, or illegal-roleplay edge cases | Safety intervention, blocked content, possible review | Medium to high | Higher harm sensitivity even when framed as fiction |
| Spam, scams, botting, or abusive automation | Restriction or suspension | High | Platform abuse, not only content risk |
| Impersonation or deceptive public bot creation | Bot removal, account action | Medium to high | Trust and misuse problems, especially in public discovery |
| Public bot greetings or descriptions with prohibited content | Content takedown, creator-side penalties | High | Public visibility creates more moderation surface |
| Bug exploitation or security abuse | Serious enforcement, possible termination | Very high | Moves from rule-breaking into platform integrity risk |

Those are the practical buckets. Severity matters, yes, but intent, repetition, and visibility matter too. One clumsy prompt may get blocked. A pattern of trying to outsmart the system can look like deliberate evasion. That difference is often bigger than users want to admit.

NSFW prompts and sexual roleplay: common trigger, but not the whole story

Explicit sexual content is one of the most common reasons people run into Character AI filters. That much is clear from user reports and search behavior. However, articles that reduce everything to “do not sext the bot” miss the wider picture.

Users can also run into trouble through repeated edge-testing, risky public bot setups, abusive behavior, deceptive character design, spam, or attempts to force blocked outcomes. In other words, the issue is not only what you asked for once. It is whether the pattern starts to look intentional, public, harmful, or abusive.

So if your question is “can you get banned from Character AI for NSFW chats?”, the practical answer is yes. Sexual content can trigger filters and may contribute to stronger enforcement, especially if you keep pushing after obvious limits appear.

That is the trap.

Filter bypass attempts often carry more risk than one bad prompt

Many people assume the dangerous part is the original prompt. Often, the riskier part is what happens after the first block.

If you keep retrying with coded words, typo tricks, broken spelling, layered jailbreak prompts, or “innocent” rewrites aimed at the same result, you are no longer in accident territory. You are showing intent. Platforms may already react poorly to one bad message; they tend to react even more sharply when behavior starts to look like a campaign against the filter itself.

Picture two users. One sends an explicit prompt, gets blocked, and moves on. The other spends twenty minutes trying aliases, metaphors, loopholes, and wrappers to force the same scene through. Those are not the same signal, even if each individual attempt looks mild on its own.

If you want a simple rule, use this: one awkward prompt can be a mistake; repeated bypass attempts look like strategy.

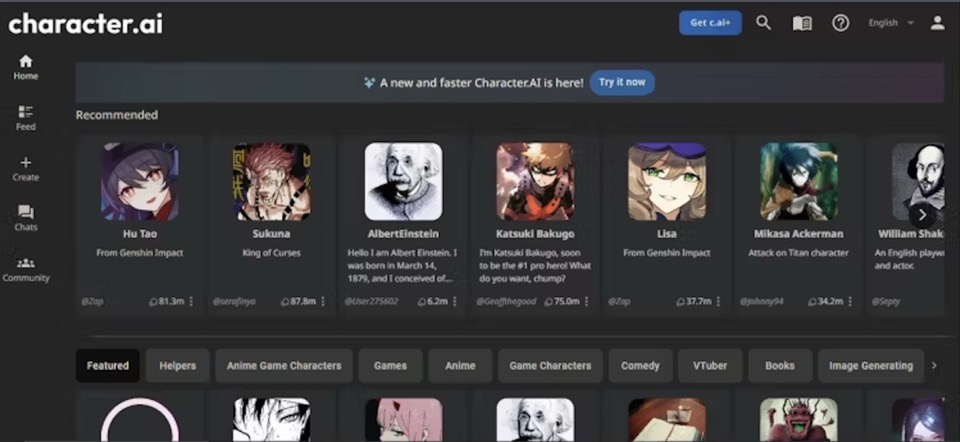

Public bots create more exposure than private chats

This point gets missed all the time. If you create public characters, greetings, descriptions, personas, or discoverable roleplay setups, you usually have more moderation exposure than someone chatting quietly one-to-one.

Why? Because public content is visible, reportable, and easier for platform systems to scan at scale. A risky greeting or bot description is not buried inside one conversation. It becomes a published asset tied to your account.

That matters for creators in particular. Plenty of users are not only chatting for fun. They are testing character ideas, building recurring personas, shaping audience-facing bots, or experimenting with formats they may later use in communities, content, or paid offers. If that sounds familiar, creator-side moderation risk is the real issue. Your public setup can attract attention much faster than a private chat log.

Can private chats still get you in trouble?

The safest answer is the one many users do not want: private does not mean exempt.

Private chats may feel personal. They still happen inside someone else’s platform, under someone else’s rules, and through someone else’s moderation systems. That does not mean every private exchange is reviewed by a human. It does mean policy can still apply.

This is a line worth accepting early. If your model is “nobody sees this, so I am safe,” you are leaning on a fantasy. Private may lower visibility compared with a public bot. It does not remove enforcement risk.

Think of it like rented studio space. The door can be closed; the building owner still writes the rules.

And that is why so many heavy users get blindsided. They confuse privacy with ownership. Those are completely different things.

Most people ask “can I get banned?” The better question is “how dependent am I on one platform?”

The ban question is real. It is also too small.

If you use Character AI casually, a warning or blocked message is mostly just annoying. If you use it heavily, emotionally, creatively, or as part of creator work, moderation is only one layer of risk. The deeper problem is dependence.

Maybe you built a long-running roleplay world. Maybe you use one character as a writing partner. Maybe you test dialogue styles for streaming bits, fan engagement, companion products, or audience experiments. Maybe the app has simply become part of your daily rhythm. In each version, the same truth shows up: if continuity matters to you, the platform holds more power than you think.

That power does not always arrive as a dramatic ban screen. Sometimes it shows up as vaguer things: more filtering, lower output quality, changed rules, missing access, slower support, or a bot that no longer works the way your workflow depends on. Death by friction is still loss.

Once you see that, the next question gets sharper. Instead of only asking how to avoid punishment, you start asking what part of your stack you actually control.

That is the better question. It leads somewhere.

Why users misread a filter as a ban

Part of the confusion is simple: moderation UX is often bad. Systems do not explain themselves with the clarity users want. You see a refusal, a chat behaves differently, or a feature seems to disappear, and your brain jumps straight to the worst-case story.

A single blocked response can feel like your account was flagged. Meanwhile, a bot that suddenly feels flatter can make you think punishment happened behind the scenes. Then a login issue appears, and now every recent risky conversation looks suspicious in hindsight.

Sometimes that fear is wrong. Sometimes it is not. The point is that vague moderation creates rumor, and rumor pushes people into either panic or denial.

Here is a common scenario: a creator builds a public character with suggestive subtext in the greeting, edits it a few times, notices stranger behavior, gets weaker responses, and concludes, “my character is shadowbanned.” Maybe. Or maybe the content is being constrained at the model or policy layer. From the outside, those can feel identical.

Therefore, you need a decision framework, not guesswork.

A simple risk ladder: low, medium, and high-risk behavior

If you want a cleaner way to judge your own situation, use six factors: severity, intent, frequency, visibility, impact, and recoverability. This will not give you certainty. It will give you a much better read than panic threads and random anecdotes.

Low risk usually looks like one clumsy prompt, one isolated wording mistake, or one accidental edge phrase without repeated pushing after a block. Medium risk starts to look like repeated boundary testing, grey-area roleplay, risky public descriptions, or a pattern that shows you keep pressing the same line. High risk is where behavior looks clearly deliberate or harmful: filter evasion, harassment, hate, scams, spam, exploit attempts, or public prohibited bots tied to your account.

Now turn that into a quick self-check. Was the content severe? Did it look intentional? Did it happen once or keep happening? Was it private, or was it attached to a public bot or profile? Could other users be affected? And if action happens, are you looking at a blocked message, a warning, a suspension, or a total loss of access?
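The self-check above can be sketched as a small function. This is only an illustration of the article's ladder, not how Character AI scores anything: the `Incident` fields and the `risk_level` name are hypothetical, and the six factors are collapsed into four yes/no answers (recoverability is left out because it describes the outcome, not the behavior).

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """One self-assessed situation. Every field is a hypothetical label."""
    harmful: bool   # severity and impact: harassment, hate, scams, spam, exploits
    evasion: bool   # intent: deliberate attempts to get around a block
    repeated: bool  # frequency: kept pressing the same line after a block
    public: bool    # visibility: attached to a public bot or profile


def risk_level(incident: Incident) -> str:
    """Return 'low', 'medium', or 'high' following the rough ladder in the text."""
    # Clearly deliberate or harmful behavior sits at the top of the ladder.
    if incident.harmful or incident.evasion:
        return "high"
    # Repetition or public exposure moves an otherwise mild case up one step.
    if incident.repeated or incident.public:
        return "medium"
    # One isolated, private mistake with no pushing after the block.
    return "low"
```

For example, `risk_level(Incident(harmful=False, evasion=False, repeated=True, public=False))` returns `"medium"`. The result is a reading aid for your own behavior, not a prediction of what the platform will do.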

This shift matters because it moves you from emotion to diagnosis. When people feel powerless, they get reckless. Once they can read the situation, they usually make better choices.

If you think you were banned, do this first

Do not start by making a new account. Do not start by rage-posting. And do not start by assuming the platform erased everything. First, figure out what actually happened.

Check for official notices in email, app messages, or account alerts. Confirm whether the problem is message-level, feature-level, or account-level. Review the last things you did: prompts, edits, public bot changes, spammy behavior, repeated retries. Take screenshots and note timestamps while you still can. Use official support or appeal channels instead of rumor threads. And stop testing the same risky behavior while the issue is unresolved.

This sounds basic. It prevents a common spiral. People who are unsure whether they hit a filter or a true enforcement action often make it worse by checking the boundary again and again. That is like smelling smoke and throwing paper at it to see if the fire is real.

If you still have access to important chats, notes, or bot copy, save what you can. Quietly. Immediately.

What to include in an appeal

Appeals work better when they are calm, specific, and easy to review. Keep it clean.

State what happened and when it happened. Describe what you think triggered the action. Add screenshots, timestamps, and any useful account details. If you crossed a line, say so plainly instead of playing games. Ask for review or clarification in direct language.

Do not write an emotional essay. Do not open with an argument about the rules. And do not send five conflicting explanations. Support teams move faster on clean cases than chaotic ones.

Also, be realistic. Appeal outcomes may be slow, limited, or unclear. Because of that, building your whole routine around one app is a bad bet if continuity matters to you.

For creators, streamers, and heavy users, the real cost is lost continuity

This is the part many generic posts never reach, and for serious users it is the part that matters most.

Losing an AI account is not only about losing access to a toy. For some people, it means losing a long-running story world, a comfort routine, a scripted persona, a bot they were refining for community use, or a pile of conversations that fed other work. If you are creator-minded, that continuity has value.

Real value.

One week of friction is annoying. Losing months of iteration is expensive. Losing a format that was turning into a real asset is worse. This is how people quietly burn momentum: they build on rented ground, then act shocked when the landlord redraws the map.

There is also upside here, and it is bigger than damage control. Once you stop treating AI chat apps like permanent homes and start treating them like tools you compare, test, and back up, your options open up fast. You can choose for fit instead of habit. You can build workflows that survive policy shifts. You can turn rough experiments into durable systems instead of fragile threads trapped in one app.

That is where growth starts.

Trade-off: keep using Character AI, or compare alternatives?

This does not need to become a dramatic breakup speech. Character AI may still work for you if your use is casual, low-risk, and not central to your emotional routine or creator workflow. Plenty of people can live with moderation limits because they are not building much on top of the platform.

However, the trade-off changes once your investment grows.

If you want more predictability, more control over boundaries, less moderation guesswork, or a better fit for your use case, comparing other platforms becomes the smart move. Not because Character AI is uniquely bad. Instead, because platform dependence gets expensive when the platform no longer matches what you need.

A simple decision framework helps. Keep using Character AI if you mostly want casual chats, can tolerate filters, and would not be seriously hurt by losing continuity. Start comparing alternatives if you care about creator control, roleplay flexibility, emotional continuity, or reducing moderation surprises before they wreck your habits or projects.

If you are in that second group, the best next move is comparison, not another rumor thread. Modelnet’s Alternative to Replika: Best Options to Compare in 2026 is useful even if Character AI is your starting point, because the real question is no longer one app. It is what kind of AI platform you can actually build around.

And if you want a wider look first, the guide to AI roleplay sites can help you see the field before you lock yourself deeper into one ecosystem.

If you are not just chatting for fun, but thinking about AI characters as a product, platform dependence becomes a business risk. Rules can change, filters can tighten, and the character experience you build inside someone else’s app can disappear or stop matching your audience’s expectations. For creators, founders, and adult AI businesses, the smarter move is to build the AI experience on infrastructure you control.

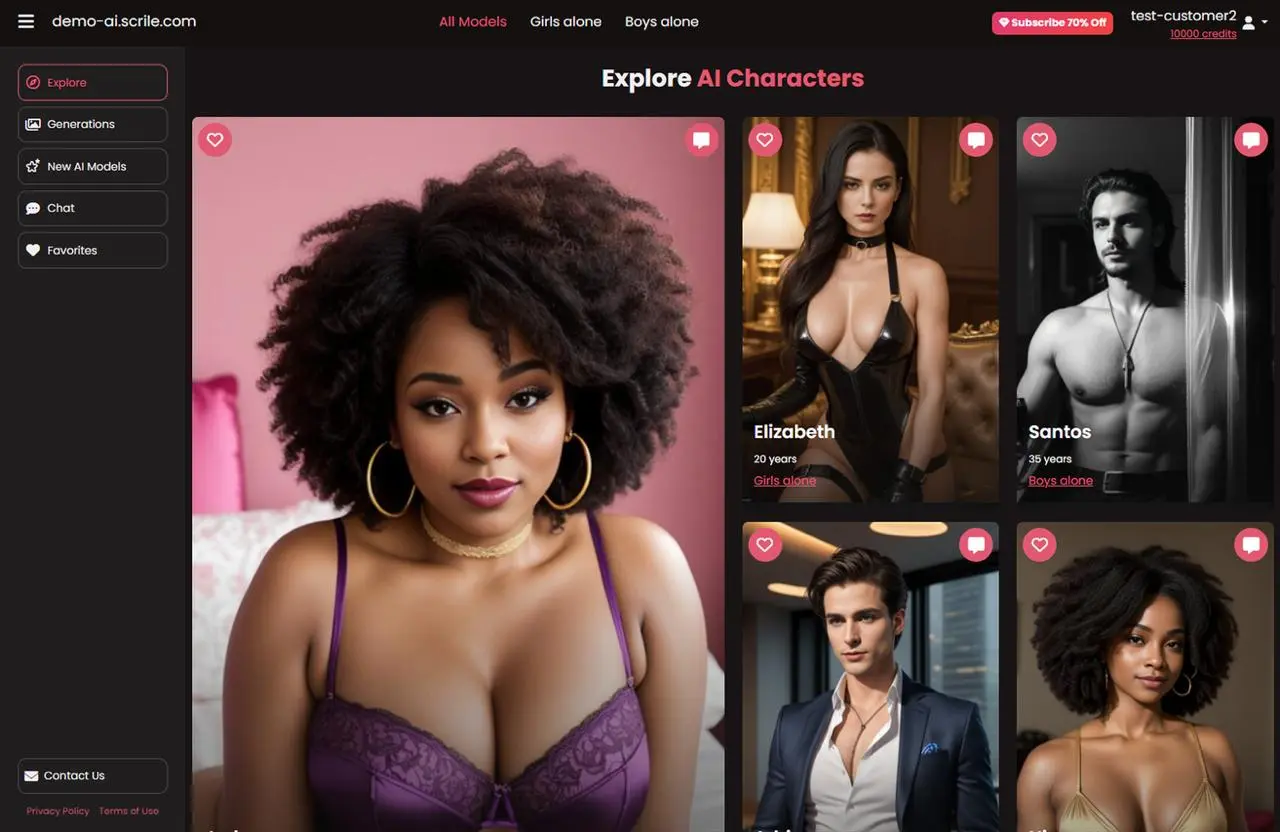

Scrile AI helps you launch your own AI companion platform with interactive AI characters, roleplay chat, AI image generation, subscriptions, token-based monetization, paid image access, admin tools, privacy controls, and brand customization. Instead of depending on Character AI or another third-party app, you can create a branded AI chatbot business where you control the characters, monetization model, user experience, and platform rules.

Better habits if you rely on any AI chat platform

The strongest move is not fear. It is better operating habits.

Read current rules before you push edge cases. Do not assume private means safe. Treat repeated jailbreak attempts as compounding risk, because that is usually what they are. Keep public bots cleaner than your instincts tell you to. Save writing, prompts, character notes, and important threads outside the app when possible. And if a platform starts mattering to your routine, compare options before the dependence becomes invisible.
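The habit of saving material outside the app can be as simple as a dated local file. The sketch below assumes you have already copied the text you care about by hand; it does not call any Character AI export feature or API, and the `save_backup` name, the folder, and the file naming are all hypothetical choices.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def save_backup(notes: dict[str, str], folder: Path) -> Path:
    """Write manually copied notes to a timestamped JSON file and return its path."""
    # Create the backup folder if it does not exist yet.
    folder.mkdir(parents=True, exist_ok=True)
    # A UTC timestamp in the file name keeps older backups from being overwritten.
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    target = folder / f"chat-backup-{stamp}.json"
    # ensure_ascii=False keeps non-English text readable in the file.
    target.write_text(
        json.dumps(notes, ensure_ascii=False, indent=2),
        encoding="utf-8",
    )
    return target
```

A call might look like `save_backup({"greeting": "...", "persona notes": "..."}, Path("backups"))`. Plain JSON is used here because it stays readable without any particular app, which is the point of keeping a copy you control.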

It also helps to understand the broader consumer-risk side of the internet. Public agencies such as the Federal Trade Commission regularly publish guidance around deceptive practices, data handling, and platform accountability. Even when a moderation decision is not a legal issue, the bigger lesson is the same: if your access, data, or workflow lives inside a service you do not control, you need a backup mindset.

| Risk | Character AI example | Why it matters | Mitigation step |
|---|---|---|---|
| Moderation ambiguity | A blocked chat feels like a ban, but you get little explanation | Confusion leads to panic or reckless retries | Separate message blocks from account actions and document what happened |
| Platform lock-in | Your favorite bots, chats, or routines live in one app | Access changes can break continuity overnight | Back up important material and avoid relying on one platform alone |
| Public creator exposure | A visible bot greeting or description draws reports or enforcement | Public assets create more risk than private experiments | Keep public-facing bots within safer boundaries than private use |
| Repeated boundary testing | You keep trying to beat the filter with new phrasing | Patterns look more deliberate than one mistake | Stop after the first clear block instead of escalating |

That is how you keep more ownership.

The bigger opportunity here is not merely avoiding a Character AI account ban. It is becoming the kind of user or creator who does not get trapped by one company’s moderation ambiguity in the first place. That difference can sound abstract until access breaks, support goes quiet, or a project you thought was yours turns out to live entirely on someone else’s terms.

So yes, Character AI can ban accounts, and yes, certain behavior raises that risk fast. But the stronger takeaway is larger than rule avoidance: learn the enforcement ladder, stop confusing filters with full bans, reduce deliberate risk, save what matters, and compare platforms before you hand one app too much control over your continuity.

That is the next move. Make it before you need it.

Frequently asked questions

Can private chats or roleplay on Character AI still lead to a warning, suspension, or ban?

Yes. Private chats may have less visibility than public bots, but they still happen under the platform’s rules and moderation systems. Roleplay, sexual content, abusive language, or repeated boundary-pushing in private can still trigger warnings, restrictions, or stronger account action.

What’s the difference between a blocked message, a filtered response, a temporary restriction, and a real Character AI ban?

A blocked message or filtered reply usually means a specific prompt or chat hit a content boundary, while your account still exists. A temporary restriction or suspension is an account-level penalty that may limit access for a period of time. A real ban usually means enforcement has escalated to account termination or effective loss of access.

Can you get banned from Character AI for trying to bypass the filter with coded words, jailbreak prompts, or repeated retries?

Yes, that can raise your risk. One blocked prompt may be treated as a mistake, but repeated retries with coded wording, typo tricks, or jailbreak-style prompts can look intentional. Platforms often react more strongly to evasion patterns than to a single failed message.

How do you appeal a Character AI ban or suspension, and what evidence should you include to improve your chances?

Use the official support or appeal channel and keep your message clear, calm, and specific. Include your account details, approximate timeline, screenshots of warnings or errors, and a short explanation of what happened without arguing emotionally. If the action was a misunderstanding, concise evidence and a cooperative tone usually help more than long complaints.

When does it make sense to keep using Character AI versus switching to another AI platform if you want fewer moderation surprises or more control over your chats?

It makes sense to stay if Character AI still fits your goals and occasional filtering is manageable for you. If you rely on long roleplay threads, creator testing, or more predictable control over how chats and bots behave, comparing other platforms may be the smarter move. A practical next step is to review an Alternative to Replika: Best Options to Compare in 2026 at https://modelnet.club/blog/best-replika-alternatives-guide/.

Are public bots more likely to trigger moderation than private conversations?

Usually, yes. Public bot names, greetings, descriptions, and discoverable setups create more exposure because they are easier to scan, report, and review at scale. If you publish characters, creator-side moderation risk is often higher than in a private one-to-one chat.

Polina Yan is a Technical Writer and Product Marketing Manager at Scrile, specializing in helping creators launch personalized content monetization platforms. With over five years of experience writing and promoting content for Scrile Connect and Modelnet.club, Polina covers topics such as content monetization, social media strategies, digital marketing, and online business in the adult industry. Her work empowers online entrepreneurs and creators to navigate the digital world with confidence and achieve their goals.